May 14, 2026

6 min read

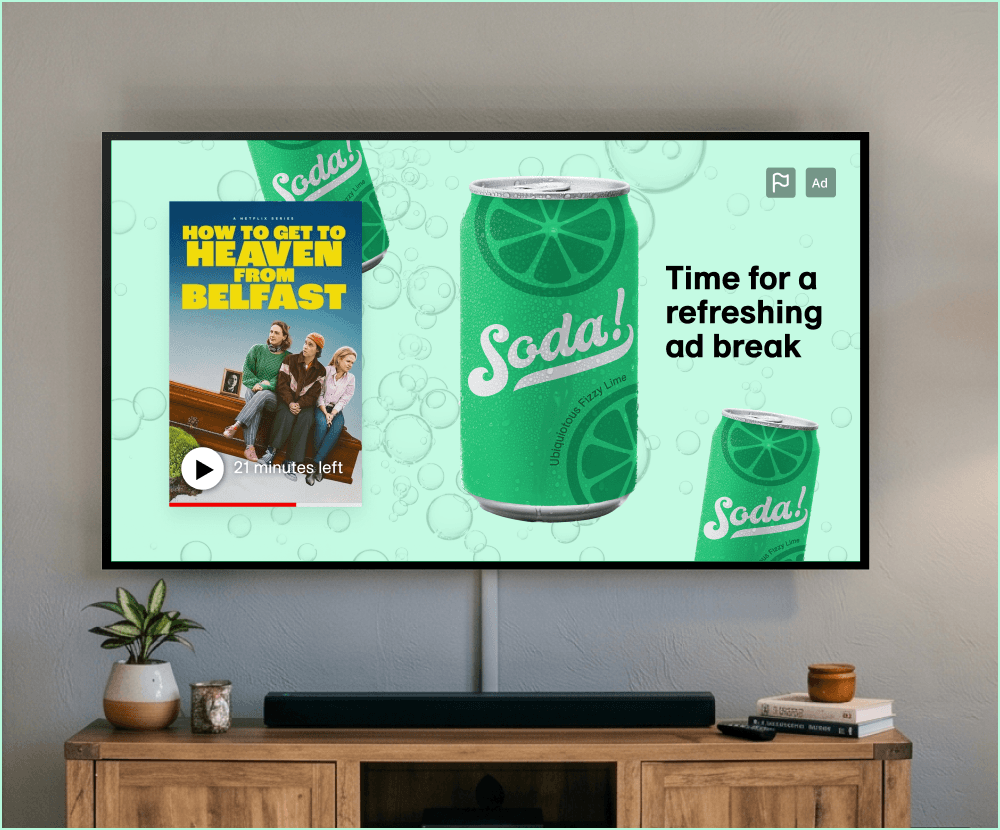

The Reinvention of CTV: 12 Realities for Programmatic and Measurement in 2026

LiveRamp

Clean Rooms

Marketing

TV Advertising

May 7, 2026

Expanding the Most Powerful Data Collaboration Network with Premium New Destinations

Matthew Hogg

Identity

Data Collaboration

Marketing

Marketing Measurement

TV Advertising

April 30, 2026

Breaking the Auto Bubble: How to Reach More Buyers with Identity Resolution

Sonam Katari

Data Collaboration

Identity

Marketing

April 24, 2026

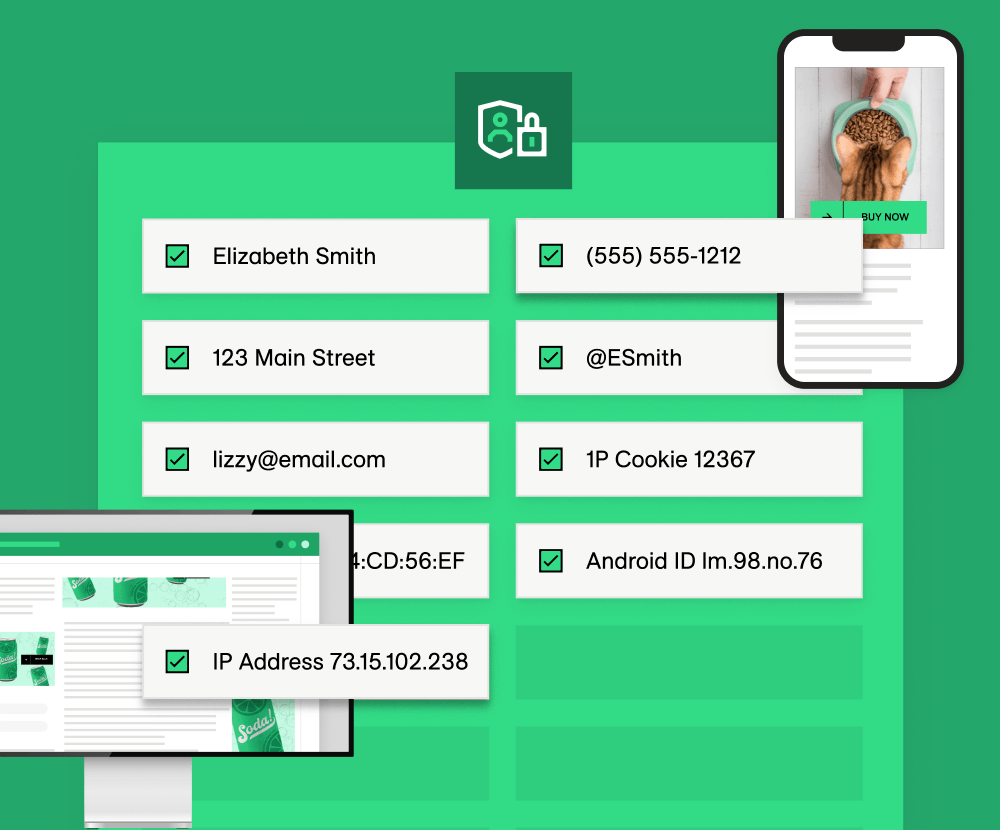

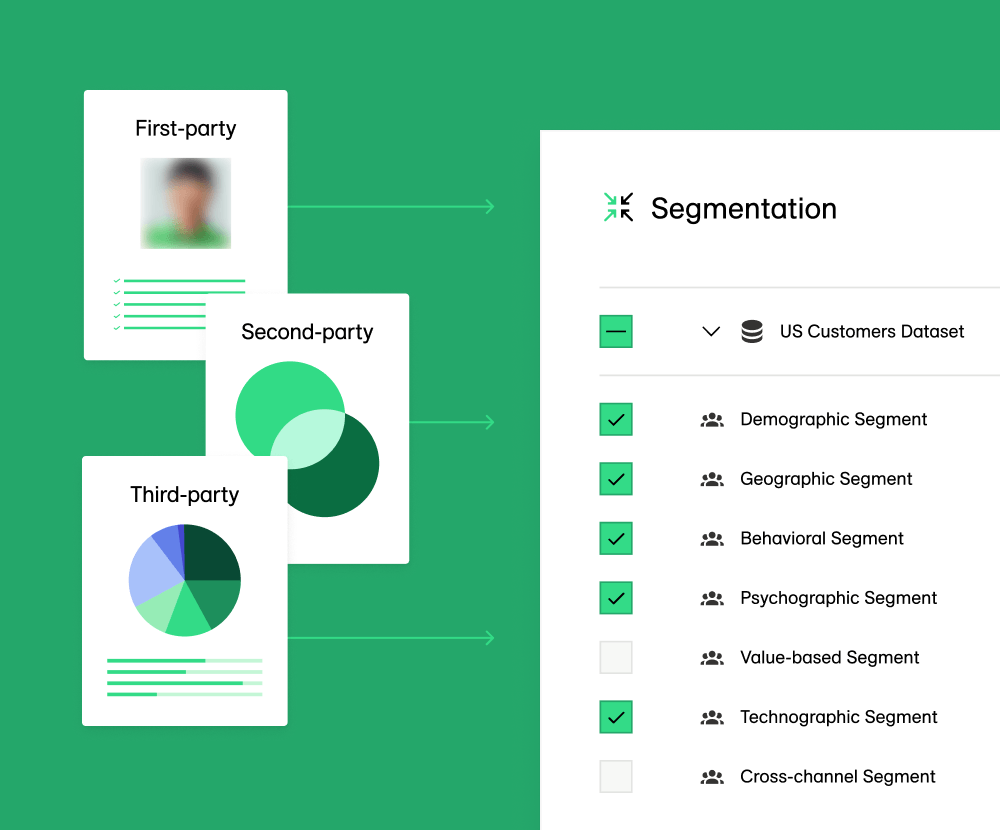

Take Control of Your Marketing Strategy: What is Audience Planning and Customer Data Segmentation?

LiveRamp

April 23, 2026

Maximizing 2026 Upfront Commitments: Transitioning from Reach to Verifiable Outcomes

Ryan Rolf

TV Advertising

April 17, 2026

What is Customer Data Enrichment?

LiveRamp

April 16, 2026

Symphony Health, an ICON plc company, and LiveRamp: Scaling Reach and Measurement in a Fragmented Media Landscape

Stephen Fugedy

Data & Analytics

Data Collaboration

Identity

LiveRamp Partnerships

Marketing

April 6, 2026

The LiveRamp Clean Room and the Need for Speed

Chetan Urkudkar

Engineering & Technology

Subscribe to Beyond the Signal

Make sense of what’s next in marketing. Every month.
